You are working with the text-only light edition of "H.Lohninger: Teach/Me Data Analysis, Springer-Verlag, Berlin-New York-Tokyo, 1999. ISBN 3-540-14743-8". Click here for further information.

See also: model finding, establishing ARIMA models

ARIMA (auto-regressive integrated moving average) models constitute a powerful class of models that can be applied to many real time series. ARIMA models consist of three parts: (1) an auto-regressive part, (2) a moving-average part, and (3) a part involving the first derivative of the time series, which for discrete data means the differences between successive values:

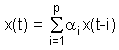

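The auto-regressive (AR) part of the model rests on the idea that each value of a time series can be described by a linear model of the preceding observations. For instance: x(t) = 3 x(t-1) - 4 x(t-2). The general formula for AR[p] models (auto-regressive models of order p) is:

$$x(t) = a_1\,x(t-1) + a_2\,x(t-2) + \dots + a_p\,x(t-p)$$

where $a_1, \dots, a_p$ are the model coefficients (in the example above, $p = 2$, $a_1 = 3$ and $a_2 = -4$).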
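As an illustration, the AR[p] formula can be evaluated directly. The following Python sketch computes a one-step forecast from the most recent p observations. The function name `ar_forecast` and the coefficient list `a` are hypothetical, and the coefficients are assumed to be known already; estimating them is not covered here.

```python
from typing import Sequence


def ar_forecast(x: Sequence[float], a: Sequence[float]) -> float:
    """One-step AR[p] forecast: x(t) = a_1 x(t-1) + ... + a_p x(t-p).

    x holds the observed values, oldest first; a holds a_1 .. a_p.
    """
    p = len(a)
    if len(x) < p:
        raise ValueError("need at least p past observations")
    # a[i] is a_(i+1); it multiplies the observation i+1 steps back.
    return float(sum(a[i] * x[-1 - i] for i in range(p)))


# Example from the text: x(t) = 3 x(t-1) - 4 x(t-2),
# with x(t-2) = 2 and x(t-1) = 5.
print(ar_forecast([2.0, 5.0], [3.0, -4.0]))  # 3*5 - 4*2 = 7.0
```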

The consideration leading to moving average (MA) models is that the values of a time series can be expressed as depending on the preceding estimation errors: past estimation or forecasting errors are taken into account when estimating the next value of the time series. The difference between the estimated and the actually observed value of x(t) is denoted e(t). For instance: x(t) = 3 e(t-1) - 4 e(t-2).

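The general description of MA[q] models is:

$$x(t) = b_1\,e(t-1) + b_2\,e(t-2) + \dots + b_q\,e(t-q)$$

where $b_1, \dots, b_q$ are the model coefficients.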
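A minimal Python sketch of the MA[q] formula follows. Because each estimate needs the errors of earlier steps, the series is processed in order and every new error is recorded as it arises. The sketch assumes e(t) = x(t) - estimate(t) (observed minus estimated) and treats errors before the start of the series as zero; both are assumptions for illustration, and the names `ma_forecast` and `ma_errors` are hypothetical.

```python
from typing import List, Sequence


def ma_forecast(e: Sequence[float], b: Sequence[float]) -> float:
    """One-step MA[q] forecast: x(t) = b_1 e(t-1) + ... + b_q e(t-q).

    e holds the past estimation errors, oldest first; b holds b_1 .. b_q.
    Errors before the start of the series count as zero (assumption).
    """
    return float(sum(b[j] * e[-1 - j] for j in range(len(b)) if j < len(e)))


def ma_errors(x: Sequence[float], b: Sequence[float]) -> List[float]:
    """Run the MA model over x and collect e(t) = x(t) - estimate(t)."""
    errors: List[float] = []
    for value in x:
        estimate = ma_forecast(errors, b)  # uses only earlier errors
        errors.append(value - estimate)
    return errors


# Coefficients from the text: x(t) = 3 e(t-1) - 4 e(t-2)
b = [3.0, -4.0]
errors = ma_errors([1.0, 2.0, 4.0], b)
print(errors)                  # [1.0, -1.0, 11.0]
print(ma_forecast(errors, b))  # 3*11 - 4*(-1) = 37.0
```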

Combining AR and MA models yields ARMA models. In general, forecasting with an ARMA[p,q] model is described by the following equation:

$$x(t) = \sum_{i=1}^{p} a_i\,x(t-i) + \sum_{j=1}^{q} b_j\,e(t-j)$$
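The ARMA forecast can be sketched in Python by running the model over the series, recording the estimation error at each step, and then evaluating both sums for the next time step. This is a sketch under stated assumptions: the coefficients `a` and `b` are taken as known, e(t) is taken as observed minus estimated, values and errors before the start of the series count as zero, and all function names are hypothetical.

```python
from typing import List, Sequence, Tuple


def _estimate(x: Sequence[float], e: Sequence[float],
              a: Sequence[float], b: Sequence[float], t: int) -> float:
    """ARMA estimate of x(t) from values and errors before index t."""
    ar = sum(a[i] * x[t - 1 - i] for i in range(len(a)) if t - 1 - i >= 0)
    ma = sum(b[j] * e[t - 1 - j] for j in range(len(b)) if t - 1 - j >= 0)
    return float(ar + ma)


def arma_filter(x: Sequence[float], a: Sequence[float],
                b: Sequence[float]) -> Tuple[List[float], List[float]]:
    """Run an ARMA[p,q] model over x; return (estimates, errors)."""
    estimates: List[float] = []
    errors: List[float] = []
    for t, value in enumerate(x):
        est = _estimate(x, errors, a, b, t)
        estimates.append(est)
        errors.append(value - est)  # e(t) = observed - estimated
    return estimates, errors


def arma_forecast(x: Sequence[float], a: Sequence[float],
                  b: Sequence[float]) -> float:
    """One-step-ahead forecast of the value following x."""
    _, errors = arma_filter(x, a, b)
    return _estimate(x, errors, a, b, len(x))


print(arma_filter([2.0, 3.0, 1.0], [0.5], [0.25]))
# ([0.0, 1.5, 1.875], [2.0, 1.5, -0.875])
print(arma_forecast([2.0, 3.0, 1.0], [0.5], [0.25]))  # 0.28125
```

With an empty `b` the function reduces to a pure AR forecast, and with an empty `a` to a pure MA forecast.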

If the time series is additionally differenced before the model is applied, and the model output is integrated (summed up) again afterwards, one speaks of ARIMA models. They are used when a trend has to be filtered out of the time series.
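For a single differencing step, the difference-then-integrate procedure can be sketched in Python as follows. The forecasting model applied to the differenced series is passed in as a function; in the example it is an AR[1] model with a_1 = 1, chosen purely for illustration. The names `difference` and `forecast_d1` are hypothetical.

```python
from typing import Callable, List, Sequence


def difference(x: Sequence[float]) -> List[float]:
    """One differencing step: y(t) = x(t) - x(t-1)."""
    return [x[t] - x[t - 1] for t in range(1, len(x))]


def forecast_d1(x: Sequence[float],
                model: Callable[[List[float]], float]) -> float:
    """Forecast the next value of x using one differencing step.

    The model forecasts the next difference; integrating that forecast
    means adding it to the last observed value of x.
    """
    y = difference(x)               # trend removed
    next_difference = model(y)      # model applied to the differenced series
    return x[-1] + next_difference  # integrate back


# A series with a linear trend; its differences are constant.
series = [1.0, 3.0, 5.0, 7.0]
print(difference(series))  # [2.0, 2.0, 2.0]
# Stand-in model: AR[1] with a_1 = 1 (repeat the last difference).
print(forecast_d1(series, lambda y: 1.0 * y[-1]))  # 9.0
```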

The parameter d of the ARIMA[p,d,q] model determines the number of differencing steps.
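To illustrate the role of d, the following Python sketch applies d differencing steps and then undoes them. Each differencing step drops the first value of the series, so undoing it requires that value; the hypothetical helper `integrate` therefore takes these starting values as an argument, and `start_values` (also hypothetical) collects them.

```python
from typing import List, Sequence


def difference(x: Sequence[float], d: int = 1) -> List[float]:
    """Apply d differencing steps; each step shortens the series by one."""
    y = list(x)
    for _ in range(d):
        y = [y[t] - y[t - 1] for t in range(1, len(y))]
    return y


def start_values(x: Sequence[float], d: int) -> List[float]:
    """First value of the series after 0, 1, ..., d-1 differencing steps."""
    return [difference(x, k)[0] for k in range(d)]


def integrate(y: Sequence[float], starts: Sequence[float]) -> List[float]:
    """Undo len(starts) differencing steps by cumulative summation."""
    z = list(y)
    for start in reversed(starts):  # undo the last differencing step first
        out = [start]
        for v in z:
            out.append(out[-1] + v)
        z = out
    return z


x = [1.0, 4.0, 9.0, 16.0, 25.0]  # quadratic trend t**2
print(difference(x, 1))  # [3.0, 5.0, 7.0, 9.0]
print(difference(x, 2))  # [2.0, 2.0, 2.0]
print(integrate(difference(x, 2), start_values(x, 2)) == x)  # True
```

A polynomial trend of degree d becomes constant after d differencing steps, as the quadratic example shows, which is why a small value of d is usually sufficient to remove a trend.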

Last Update: 2004-Jul-03