You are working with the text-only light edition of "H.Lohninger: Teach/Me Data Analysis, Springer-Verlag, Berlin-New York-Tokyo, 1999. ISBN 3-540-14743-8". Click here for further information.


See also: selection of variables, backward selection, growing neural networks

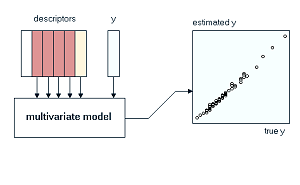

Forward selection is a method for finding the "best" combination of variables: it starts with a single variable and increases the number of variables in the model step by step. Which variable is added at each step is decided by a criterion that varies from method to method. For linear regression, the partial F values are usually used.
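For reference, one common way of writing the partial F value of a candidate variable is given here. The notation is an assumption made for illustration and does not come from the text: n is the number of observations, p the number of variables already in the model (which also contains an intercept), RSS_p the residual sum of squares of the current model, and RSS_{p+1} the residual sum of squares after the candidate variable x_j has been added.

$$
F_j = \frac{\mathrm{RSS}_p - \mathrm{RSS}_{p+1}}{\mathrm{RSS}_{p+1} / (n - p - 2)}
$$

The numerator is the reduction of the residual sum of squares achieved by the single added variable; the denominator is the residual variance of the enlarged model, which has n - (p + 1) - 1 = n - p - 2 degrees of freedom. A large F_j indicates that x_j contributes considerably to the fit.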

The method starts by selecting the single variable that gives the best fit for the dependent variable Y. Next, this variable is combined with each of the remaining variables in turn to find the "best" pair of variables. In each further step one more variable is added in the same way, until either all variables are used up or some stopping criterion is met (e.g. the partial F value falls below a certain limit).
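The procedure described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a reference implementation: the function names `rss` and `forward_selection`, the limit `f_limit=4.0`, and the synthetic data are all hypothetical choices made for this example. The sketch also applies the partial F test to the very first variable, which is one possible reading of the stopping rule.

```python
import numpy as np

def rss(X, y, cols):
    """Residual sum of squares of a least-squares fit (with intercept) on the given columns."""
    A = np.column_stack([np.ones(len(y))] + [X[:, j] for j in cols])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    resid = y - A @ coef
    return float(resid @ resid)

def forward_selection(X, y, f_limit=4.0):
    """Add one variable per step until the best partial F value falls below f_limit."""
    n, m = X.shape
    selected = []
    remaining = list(range(m))
    rss_current = rss(X, y, selected)          # intercept-only model
    while remaining:
        # Try every remaining variable together with the ones already selected
        rss_new, best = min((rss(X, y, selected + [j]), j) for j in remaining)
        dof = n - (len(selected) + 1) - 1      # residual degrees of freedom of the enlarged model
        if dof <= 0:
            break
        # Partial F value of the best candidate (guard against a perfect fit)
        partial_f = np.inf if rss_new == 0 else (rss_current - rss_new) / (rss_new / dof)
        if partial_f < f_limit:                # stopping criterion
            break
        selected.append(best)
        remaining.remove(best)
        rss_current = rss_new
    return selected

# Synthetic data (assumption): only columns 0 and 3 actually influence y
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 5))
y = 3.0 * X[:, 0] - 2.0 * X[:, 3] + 0.1 * rng.normal(size=200)

chosen = forward_selection(X, y)
print("selected columns, in order of entry:", chosen)
```

The order of the returned list reflects the order in which the variables entered the model. Raising `f_limit` makes the procedure stop earlier; lowering it lets weaker variables enter.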

Note that forward selection does not necessarily find the best combination of variables (out of all possible combinations). However, it usually results in a combination which comes close to the optimum solution.
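The following sketch compares stepwise selection with an exhaustive search over all pairs of variables. The data set is deliberately constructed (an assumption made purely for illustration) so that the single best variable is not part of the best pair; in typical data the two results tend to be much closer. The names `rss`, `greedy_subset`, and `best_subset` are hypothetical helpers, and the stepwise search here simply picks the variable with the smallest residual sum of squares at each step, without an F test.

```python
import itertools
import numpy as np

def rss(X, y, cols):
    """Residual sum of squares of a least-squares fit (with intercept) on the given columns."""
    A = np.column_stack([np.ones(len(y))] + [X[:, j] for j in cols])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    resid = y - A @ coef
    return float(resid @ resid)

def greedy_subset(X, y, k):
    """Forward selection of exactly k variables, one variable per step."""
    selected = []
    for _ in range(k):
        remaining = [j for j in range(X.shape[1]) if j not in selected]
        selected.append(min(remaining, key=lambda j: rss(X, y, selected + [j])))
    return sorted(selected)

def best_subset(X, y, k):
    """Exhaustive search over all combinations of k variables."""
    combos = itertools.combinations(range(X.shape[1]), k)
    return list(min(combos, key=lambda c: rss(X, y, list(c))))

# Constructed data: column 2 is a noisy sum of columns 0 and 1,
# while y depends on columns 0 and 1 only.
rng = np.random.default_rng(1)
n = 500
x1 = rng.normal(size=n)
x2 = rng.normal(size=n)
x3 = x1 + x2 + 0.5 * rng.normal(size=n)
X = np.column_stack([x1, x2, x3])
y = x1 + x2 + 0.01 * rng.normal(size=n)

greedy = greedy_subset(X, y, 2)
best = best_subset(X, y, 2)
print("forward selection:", greedy, "RSS =", rss(X, y, greedy))
print("exhaustive search:", best, "RSS =", rss(X, y, best))
```

Column 2 alone fits y better than either of the other columns, so the stepwise search picks it first and cannot drop it later. The exhaustive search finds the pair of columns 0 and 1, which has a much smaller residual sum of squares. The price of the exhaustive search is that the number of combinations grows very quickly with the number of variables.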


Last Update: 2004-Jul-03